Quick Verdict

A local-first AI video editor should treat the user’s media as the center of the workflow. AI can help with transcript review, slice suggestions, visual context, and export decisions, but the product should be clear about where data goes and who pays for provider usage.

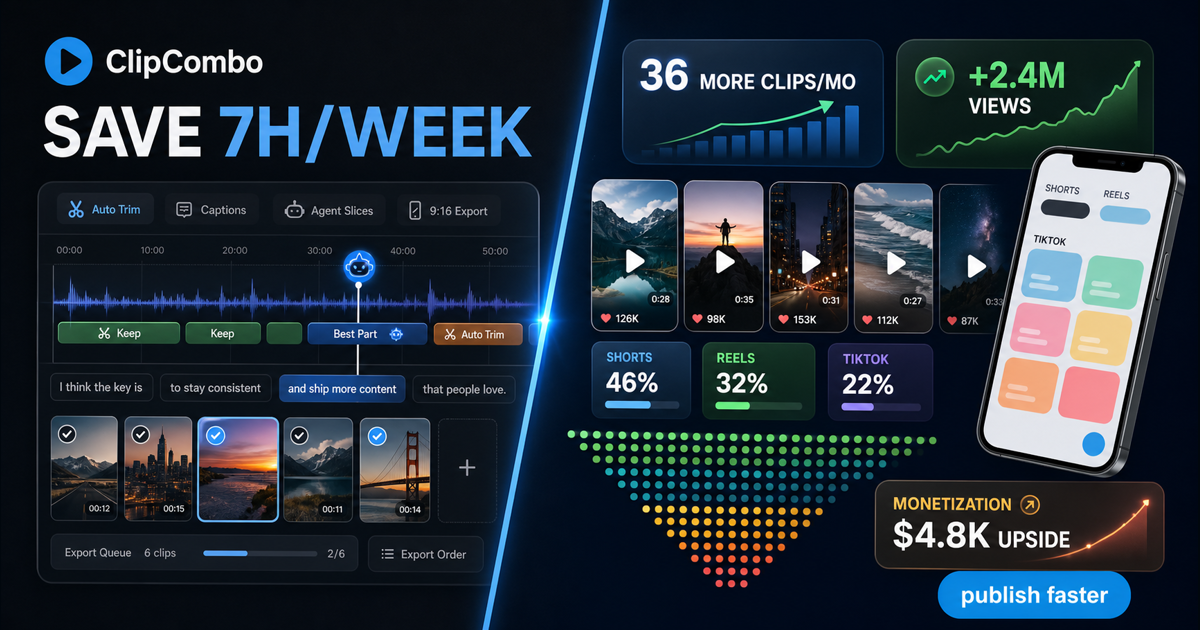

That is the ClipCombo direction.

Why Local-First Matters

Long videos are often private before they are published. They can contain client calls, course drafts, raw podcast recordings, internal webinars, or experiments that should not be casually uploaded to every tool in the stack.

ClipCombo’s product direction starts from local media handling and then layers workflow assistance on top.

What AI Should Do

AI should reduce editing drag:

| Editing drag | ClipCombo direction |
|---|---|
| Finding usable sections | Agent-assisted slicing from transcript and visual-keyframe context. |
| Fixing subtitles | Word-frequency review to catch repeated ASR mistakes. |
| Preparing shorts | 9:16 framing and export controls. |
| Shipping batches | Ordered multi-clip merge export. |
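To make the subtitle row concrete, here is a minimal Python sketch of what a word-frequency review could look like. It is an illustration only, not ClipCombo's actual implementation: the function names, the `known_words` vocabulary, and the `min_count` threshold are all assumptions. The idea is that an ASR engine tends to repeat the same mistake, so a word that appears several times but is missing from a trusted vocabulary is worth one review and one bulk fix.

```python
import re
from collections import Counter


def word_frequencies(transcript: str) -> Counter:
    """Count case-insensitive word occurrences in a transcript."""
    words = re.findall(r"[a-z0-9']+", transcript.lower())
    return Counter(words)


def review_candidates(transcript: str, known_words: set[str], min_count: int = 2):
    """Return (word, count) pairs for repeated words outside the known vocabulary.

    Hypothetical heuristic: a repeated unknown word is likely a repeated ASR
    mistake, so it is surfaced once instead of being hunted line by line.
    """
    counts = word_frequencies(transcript)
    return [
        (word, n)
        for word, n in counts.most_common()
        if n >= min_count and word not in known_words
    ]


def apply_correction(transcript: str, wrong: str, right: str) -> str:
    """Replace every whole-word occurrence of `wrong` with `right`."""
    pattern = rf"\b{re.escape(wrong)}\b"
    return re.sub(pattern, right, transcript, flags=re.IGNORECASE)


# Assumed sample data for illustration.
transcript = "the clipcomba editor is fast the clipcomba export is fast"
known = {"the", "editor", "is", "fast", "export"}

candidates = review_candidates(transcript, known)
fixed = apply_correction(transcript, "clipcomba", "ClipCombo")
```

Everything in this sketch runs on the local transcript text, so a review step like this does not by itself need any media or text to leave the machine.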

What AI Should Not Hide

AI should not hide cost or provider boundaries. ClipCombo paid plans do not include LLM, VLM, or other cloud API usage. If a workflow uses a user’s provider key, that provider relationship and cost remain separate.

That makes the paid plan simpler: ClipCombo sells workflow capability, not bundled provider usage.

Fact check date: May 14, 2026.